Editor's note. This is the final issue of The Searchlight under its weekly, solo-authored format. Starting May 8, we move to biweekly publication with rotating authorship. Hernando Liu opens the new cadence with reporting from the Future Action Summit in Sydney. The framework — deep cuts, source tier discipline, one thesis per issue — does not change. The team behind it does. Thank you for reading the first nine.

The Thesis

For enterprises deploying AI at scale, the governance gap is now a larger risk multiplier than model choice, vendor choice, or technology architecture — and the procurement decision is where it becomes measurable.

The Signal

1. Governance is a claim, not a function, in most enterprises deploying AI.

What happened. In an enterprise survey of AI leaders published by VentureBeat on April 22, 72% said AI governance was in place at their organization, but only 43% could name a central accountable team; 23% said governance was contested between teams, 20% said each platform governed itself, and 6% said no one had formally addressed it.

Why it matters. "We have governance" and "someone owns it" are different statements, and the distance between them is where exposure accumulates; Grant Thornton's 2026 AI Impact Survey of 950 business leaders found 78% lack strong confidence they could pass an independent AI governance audit within 90 days, and the figure for piloting organizations is 7%.

Second-order effect. When two agents operate from different definitions of the same business metric, they produce two confident and contradictory answers — an architecture failure, not a model failure, and it compounds with every deployment.

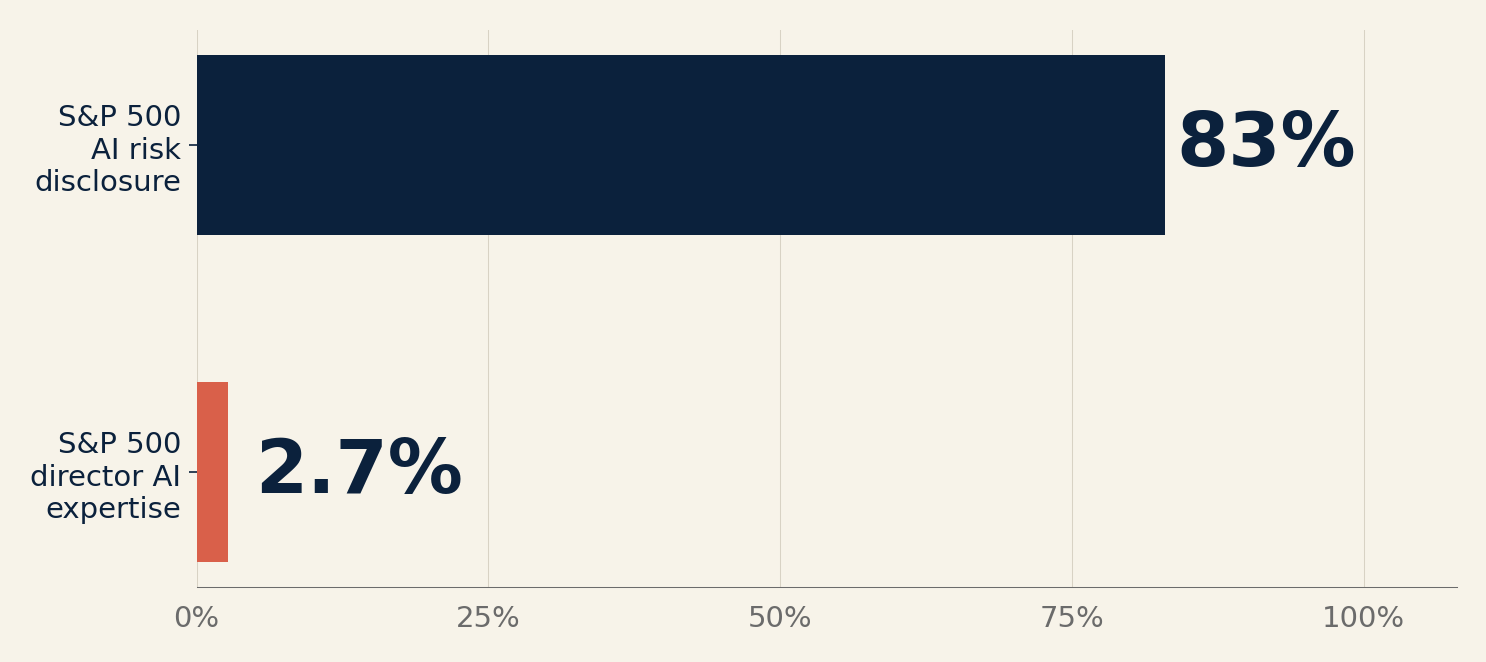

2. S&P 500 boards disclose AI as material risk 30 times more often than they seat directors who can evaluate it.

What happened. The Conference Board reported on April 22 that among S&P 500 companies, disclosure of AI as a material risk rose from 12% in 2023 to 83% in 2025, while disclosure of AI expertise among directors rose only from 1.5% to 2.7%; for comparison, disclosed technology expertise rose from 20% to 51% and cybersecurity expertise from 15% to 27%.

Why it matters. A 30x gap between risk disclosure and expertise disclosure is not a lag but a structural mismatch; audit and risk committees are increasingly the owners of AI oversight, which means the same directors who recently took on cyber, ESG, and data privacy now carry AI without corresponding training — only 23% of surveyed executives consider their board "highly fluent" in AI.

Second-order effect. Proxy-season advisors are already recommending that companies disclose named director AI expertise; expect activist pressure in the 2027 cycle to force one AI-experienced director per board at companies that do not move voluntarily.

3. Agents are entering the enterprise through productivity bundles, ahead of the governance programs built to oversee them.

What happened. Microsoft disclosed on March 9 that it has visibility into more than 500,000 agents across its own company, where it acts as Customer Zero for its new Agent 365 governance product. It simultaneously launched Microsoft 365 E7, a $99-per-user bundle combining Copilot, Agent 365, and enterprise identity, with 90% of the Fortune 500 already on Copilot and the number of customers deploying at 35,000+ seats tripling year over year.

Why it matters. When agents arrive as features of a productivity subscription, they arrive before procurement has run an AI governance review; the relevant question for 2026 is no longer "should we buy an AI tool" but "what agents are already running in the software we renewed last quarter."

Second-order effect. The 57% of enterprises without a central AI owner are running production agents today through Copilot, Gemini Enterprise, and comparable suites; the governance architecture will be built retroactively, which is always the expensive path.

The Playbook

Five questions to ask before the next board meeting or quarterly review — specific enough to use this week.

- Name the owner. Who is the named accountable owner of AI governance across the enterprise? If the answer is "the CTO's team" or "risk management," you have a 43%-or-worse governance architecture. The answer should be a person's name with authority to set policy, define escalation thresholds, and pause deployment.

- Produce the inventory. What AI agents and AI-enabled workflows are currently in production — not piloted, in production? If no one can produce this list in 48 hours, the governance claim is theater. This is the minimum precondition for any other governance activity.

- Audit the default-on clauses. Which vendor contracts permit agents to be deployed by default as part of the subscription? Microsoft Copilot, Google Gemini Enterprise, Salesforce Agentforce, and comparable bundles now ship agents as features rather than opt-in add-ons; procurement should be reviewing contract language for default-on provisions in every renewal this quarter.

- Name the escalation path. When an AI system produces a bad outcome — a denied loan, a rejected claim, an incorrect diagnosis, an erroneous hiring screen — what is the named escalation path, with time bounds and a sign-off authority? If this is unwritten, it is not a governance process; it is an improvisation waiting to fail publicly.

- Test the board. Does the board have a director who could independently evaluate the governance claim? If the answer is "our CTO briefs us," the board is being managed, not governing. The 2.7% figure from The Conference Board is not aspirational — it is a current measurement of institutional preparedness, and it is almost certainly insufficient for any company where AI is material.

The Verification Test

- Claim: "We have AI governance in place."

- Test: Ask the named owner to produce, within 48 hours, (a) a current inventory of production AI systems, (b) the written policy for human oversight and escalation, and (c) the log of any material escalation in the past 90 days.

- Pass criteria: All three produced. Current. Internally consistent. The inventory matches what procurement and IT can independently reconstruct.

- Fail smell: The inventory is "in progress." The policy is "being updated." No escalations have been logged — not because nothing material has occurred, but because no one is reporting into the structure.
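
The test above can be sketched as a small checklist in code. This is a minimal illustration, not a prescribed tool: the class, field names, and example system names are all hypothetical, and treating zero logged escalations as a finding to follow up (rather than an automatic failure) is an assumption drawn from the fail smell described above.

```python
from dataclasses import dataclass


@dataclass
class GovernanceEvidence:
    # All field names are hypothetical; they mirror items (a) to (c) of the test.
    owner_inventory: set[str]        # (a) production AI systems listed by the named owner
    independent_inventory: set[str]  # what procurement and IT reconstruct on their own
    policy_is_current: bool          # (b) written oversight and escalation policy exists and is current
    escalations_logged_90d: int      # (c) material escalations logged in the past 90 days
    hours_to_produce: float          # time the owner needed to produce all three


def verify(evidence: GovernanceEvidence) -> list[str]:
    """Return a list of findings; an empty list means the claim passes."""
    findings = []
    if evidence.hours_to_produce > 48:
        findings.append("evidence took longer than 48 hours to produce")
    if not evidence.owner_inventory:
        findings.append("no production inventory produced")
    else:
        # Systems that appear in one inventory but not the other.
        unmatched = evidence.owner_inventory ^ evidence.independent_inventory
        if unmatched:
            findings.append("inventory mismatch: " + ", ".join(sorted(unmatched)))
    if not evidence.policy_is_current:
        findings.append("oversight and escalation policy missing or out of date")
    if evidence.escalations_logged_90d == 0:
        findings.append("no escalations logged: confirm nothing material occurred")
    return findings


# Hypothetical example: the owner's list misses an agent that IT can see.
evidence = GovernanceEvidence(
    owner_inventory={"claims-triage-agent", "hr-screening-model"},
    independent_inventory={"claims-triage-agent", "hr-screening-model", "copilot-sales-agent"},
    policy_is_current=True,
    escalations_logged_90d=0,
    hours_to_produce=36,
)
findings = verify(evidence)
for finding in findings:
    print(finding)
```

The independent inventory is kept as a separate input on purpose: the pass criteria depend on procurement and IT reconstructing the list without the owner's help.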

The Metric

83% vs 2.7%.

- What it measures. Share of S&P 500 companies disclosing AI as a material risk in 2025, versus share disclosing any AI expertise among their directors: a ratio of roughly 30x (83 / 2.7 ≈ 30.7).

- Why it matters now. Boards are being asked to govern a category in which only 2.7% disclose any named expertise; this is the widest skill-to-disclosure gap in the 2025 proxy data, and it is what activists and regulators will point at.

- Source. The Conference Board, Governing AI: Four Practical Focus Areas for Boards, April 22, 2026.
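
The ratio follows directly from the Conference Board figures quoted in this issue. A minimal sketch of the arithmetic, with illustrative variable names, also shows how the same ratio looked in 2023:

```python
# Figures as quoted in this issue from The Conference Board (S&P 500 companies).
risk_disclosure = {"2023": 12.0, "2025": 83.0}  # % disclosing AI as a material risk
ai_expertise = {"2023": 1.5, "2025": 2.7}       # % disclosing AI expertise among directors

# Ratio of risk disclosure to expertise disclosure in each year.
gap_2023 = risk_disclosure["2023"] / ai_expertise["2023"]
gap_2025 = risk_disclosure["2025"] / ai_expertise["2025"]

print(round(gap_2023, 1))  # 8.0
print(round(gap_2025, 1))  # 30.7
```

On these figures the ratio was already 8x in 2023 and has since widened to roughly 30x, because risk disclosure grew far faster than disclosed expertise.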

The Lens — Horizon Search Institute

Human Performance. The governance gap is an accountability gap; the unanswered question is who is responsible when a system produces a wrong decision. [Grant Thornton]

Responsible AI. A 2.7% director-expertise figure is a concrete measurement of institutional preparedness; directors cannot govern what they cannot evaluate. [Conference Board]

Planetary Futures. Global AI governance regimes are diverging — EU AI Act, US sectoral rules, Chinese model registration — without a common reference frame for multinational enterprise compliance. [EU AI Act]

Governance and Diplomacy. When enterprises lack central AI owners, regulators fill the vacuum; procurement-side governance gaps become regulatory governance by default. [NIST AI RMF]

Links Worth Your Time

- VentureBeat — The AI governance mirage. The data behind Signal 1; worth the full read for the breakdown of "no single owner" as the #2-ranked barrier to enterprise AI governance, just behind vendor opacity.

- The Conference Board — Governing AI 2026. The primary source for the 12%-to-83% jump and the 2.7% director expertise figure; Andrew Jones's framing will anchor the proxy-season conversation.

- Microsoft — Introducing the Frontier Suite. The disclosure of 500,000+ agents inside Microsoft is the most useful data point on agent proliferation in any enterprise in 2026; read past the product-launch framing.

- Harvard CorpGov — 2026 Proxy Season Considerations. White & Case's practical guidance on how boards should now disclose AI expertise; the Fortune 100 figure jumped from 26% to 44% in a single year — the S&P 500 follows.

- EY — Technology Pulse Poll, February 2026. Complementary to Signal 1; the centralized-to-federated governance migration among tech companies is a useful preview of where other sectors will go next.

Sources

Taryn Plumb, "The AI governance mirage: why 72% of enterprises don't have the control and security they think they do," VentureBeat, April 22, 2026. venturebeat.com

The Conference Board, "In the S&P 500, Disclosure of AI Risks Surges from 12% to 83%," April 22, 2026. conference-board.org

Microsoft, "Introducing the First Frontier Suite built on Intelligence + Trust," March 9, 2026. blogs.microsoft.com

White & Case, "Key Considerations for the 2026 Annual Reporting and Proxy Season: Proxy Statements," Harvard Law School Forum on Corporate Governance, April 19, 2026. corpgov.law.harvard.edu

Grant Thornton, "2026 AI Impact Survey Report," April 2026. grantthornton.com

EY, "Technology Pulse Poll: Autonomous AI adoption surges as oversight falls behind," March 19, 2026. ey.com

ISS-Corporate, "Roughly One-Third of Large U.S. Companies Now Disclose Board Oversight of AI," March 19, 2025. issgovernance.com

Heidrick & Struggles, "Top 5 Corporate Governance Priorities for 2026," April 7, 2026. corpgov.law.harvard.edu

The Searchlight becomes a biweekly briefing on May 8, 2026. It is not investment advice. Views are the editor's own.