The Thesis

Enterprise AI became a metered utility the same week Stanford documented a 50-point confidence gap between AI insiders and the public, making this quarter's procurement decision also a political one.

The Signal

1. Anthropic ends flat-fee enterprise billing.

What happened. Anthropic restructured its enterprise plan on April 14 to bill Claude, Claude Code, and Cowork usage separately from seat fees, moving its biggest customers to per-token pricing at standard API rates; grandfathered terms expire at next contract renewal.

Why it matters. The flat-fee subscription that underwrote enterprise AI adoption since 2023 is structurally dead: compute costs have finally caught up to the demand curve, and every vendor with a seat-based agentic product — OpenAI's Codex, GitHub Copilot, Windsurf — is already under the same pressure.

Second-order effect. Multi-vendor procurement, open-weight model evaluation, and observability on token burn move from optional hedges to required capabilities in the next enterprise AI contract cycle.
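To make the shift concrete, here is a minimal sketch of how a buyer might compare an old flat fee with seat-plus-usage billing. Every number and name below (`TokenRates`, the prices, the token volumes) is a hypothetical placeholder, not Anthropic's actual rate card.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenRates:
    """Hypothetical USD prices per million tokens (placeholders, not a real rate card)."""
    input_per_mtok: float
    output_per_mtok: float


def metered_cost(input_tokens: int, output_tokens: int, rates: TokenRates) -> float:
    """Monthly usage charge in USD for one seat under per-token billing."""
    return (input_tokens / 1_000_000) * rates.input_per_mtok + (
        output_tokens / 1_000_000
    ) * rates.output_per_mtok


def scenario_costs(base_input, base_output, rates, seat_fee, multipliers=(1, 2, 3)):
    """Seat fee plus usage charge at each multiple of current usage."""
    return {
        m: seat_fee + metered_cost(int(base_input * m), int(base_output * m), rates)
        for m in multipliers
    }


def breakeven_multiplier(old_flat_fee: float, seat_fee: float, base_usage_cost: float) -> float:
    """Usage multiple above which metered billing costs more than the old flat fee."""
    if base_usage_cost <= 0:
        raise ValueError("base_usage_cost must be positive")
    return (old_flat_fee - seat_fee) / base_usage_cost


# Assumed inputs: 10M input and 2M output tokens per seat per month.
rates = TokenRates(input_per_mtok=2.0, output_per_mtok=10.0)
print(scenario_costs(10_000_000, 2_000_000, rates, seat_fee=25.0))
print(breakeven_multiplier(old_flat_fee=125.0, seat_fee=25.0, base_usage_cost=40.0))
```

The break-even multiple is the number to watch: if a team's usage is growing toward it, the renewal will cost more than the contract it replaces.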

2. Stanford documents a 50-point confidence gap between AI insiders and the public.

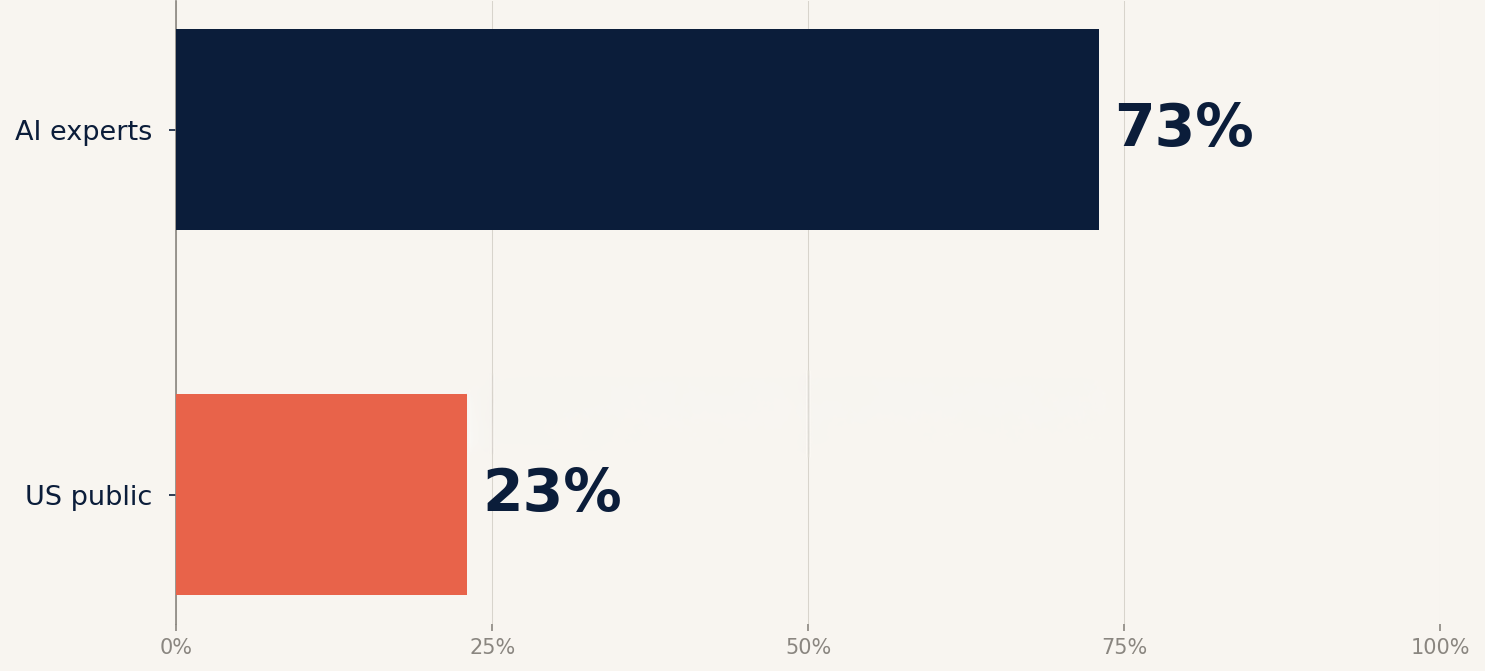

What happened. Stanford HAI released its 2026 AI Index on April 13, reporting that 73% of AI experts expect a positive impact on how people do their jobs, versus only 23% of the US public. The US also reported the lowest trust in its own government to regulate AI of any country surveyed (31%).

Why it matters. Technology policy follows constituent opinion, not expert consensus, and the Molotov cocktail thrown at Sam Altman's San Francisco home the week before the report dropped is what that gap looks like at the tail end.

Second-order effect. Regulatory fragmentation accelerates as US states move ahead of a federal framework that commands no political base; cross-border enterprises should budget for compliance overhead, not compliance reduction.

3. PwC finds 20% of firms capture 74% of AI's economic value.

What happened. PwC's 2026 AI Performance Study, released April 13, surveyed 1,217 senior executives across 25 sectors and found that 74% of AI's economic value sits with just 20% of companies; leaders are 1.7x more likely to have a Responsible AI framework and 1.5x more likely to have a cross-functional AI governance board.

Why it matters. Governance infrastructure has crossed from compliance cost to profit driver: the companies with formal AI risk frameworks are the ones actually realizing return on AI investment, not the ones still pushing it off as a legal afterthought.

Second-order effect. "Pilot purgatory" becomes a compounding disadvantage, and the Responsible AI function becomes a CFO-adjacent role rather than a compliance one.
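The 74%/20% split is easier to feel as a per-company ratio. The arithmetic below is a rough sketch that assumes value is spread evenly within each group, which the study does not claim; the function name is illustrative.

```python
def per_company_value_ratio(leader_value_share: float, leader_company_share: float) -> float:
    """How many times more value the average leader captures than the average non-leader."""
    leader_avg = leader_value_share / leader_company_share
    rest_avg = (1 - leader_value_share) / (1 - leader_company_share)
    return leader_avg / rest_avg


# 74% of value held by 20% of companies, per the PwC figures cited above.
print(round(per_company_value_ratio(0.74, 0.20), 1))  # prints 11.4
```

Under that even-spread assumption, the average leader captures roughly eleven times the value of the average company outside the top fifth.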

The Playbook

Enterprise AI procurement in the post-flat-fee era: a five-step checklist.

- Audit your renewal calendar. Map every AI vendor contract by renewal date. Anthropic's grandfathered flat-fee terms expire at renewal, not at some future industry deadline. Identify the next six months of exposure.

- Demand committed unit economics. Require written rate cards for token, seat, and agent pricing at 1x, 2x, and 3x current usage over 24 months. If the vendor will not commit, you do not have a vendor; you have a subsidy waiting to end.

- Instrument before you scale. Deploy observability on token burn, cache hit rate, and agent runtime for every production workload this quarter. You cannot negotiate what you cannot measure.

- Build a second path. Maintain a working integration with at least one alternative frontier model and one open-weight model. Switching capability is now a governance KPI, not an engineering preference.

- Write the governance memo this quarter. PwC's data is clear: the 20% of companies capturing 74% of AI's economic value are 1.7x more likely to have a Responsible AI framework. If you do not have one in place by Q3, you are the laggard in somebody else's consulting deck.
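The first and third steps of the checklist can be sketched in a few lines. This is an illustration under stated assumptions: the `Contract` and `RequestLog` records are hypothetical shapes, "six months" is approximated as 183 days, and cache hit rate is defined here as cached input tokens divided by total input tokens, which may differ from how a given vendor reports it.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Contract:
    vendor: str
    renewal_date: date


def renewals_within(contracts: list[Contract], today: date, days: int = 183) -> list[Contract]:
    """Contracts renewing within the next `days` days, soonest first."""
    cutoff = today + timedelta(days=days)
    due = [c for c in contracts if today <= c.renewal_date <= cutoff]
    return sorted(due, key=lambda c: c.renewal_date)


@dataclass(frozen=True)
class RequestLog:
    workload: str
    input_tokens: int
    cached_input_tokens: int  # portion of input_tokens served from cache (assumed field)
    output_tokens: int


def summarize_usage(logs: list[RequestLog]) -> dict[str, dict[str, float]]:
    """Per-workload token burn and cache hit rate."""
    totals: dict[str, dict[str, int]] = {}
    for log in logs:
        t = totals.setdefault(log.workload, {"input": 0, "cached": 0, "output": 0})
        t["input"] += log.input_tokens
        t["cached"] += log.cached_input_tokens
        t["output"] += log.output_tokens
    return {
        workload: {
            "total_tokens": t["input"] + t["output"],
            "cache_hit_rate": t["cached"] / t["input"] if t["input"] else 0.0,
        }
        for workload, t in totals.items()
    }


# Illustrative data only.
contracts = [
    Contract("vendor-a", date(2026, 6, 1)),
    Contract("vendor-b", date(2027, 1, 15)),
    Contract("vendor-c", date(2026, 5, 1)),
]
print([c.vendor for c in renewals_within(contracts, today=date(2026, 4, 20))])
```

Per-workload totals like these are what turn a rate-card conversation from a vendor's estimate into the buyer's own numbers.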

The Verification Test

- Claim: "Our AI vendor's pricing is stable for the contract term."

- Test: Ask for a written commitment on rate-card changes, cache TTL behavior, session caps, and throughput over the remaining term, with defined remediation if conditions change.

- Pass criteria: A signed amendment naming specific rate floors, cache durations, and throughput guarantees, with a credit mechanism if the vendor moves unilaterally.

- Fail smell: "We work with our largest customers individually" or "we wouldn't do that to you." Both mean there is nothing on paper.
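The pass criteria reduce to a simple completeness check, sketched below. The term names in `REQUIRED_TERMS` are hypothetical labels for the four items in the pass criteria, not language from any vendor contract.

```python
from typing import Mapping, Optional

# Hypothetical labels for the four items named in the pass criteria.
REQUIRED_TERMS = ("rate_floor", "cache_duration", "throughput_guarantee", "credit_mechanism")


def verify_amendment(terms: Mapping[str, Optional[str]], signed: bool) -> tuple[bool, list[str]]:
    """Return (passes, missing_terms): passes only if signed and every term is spelled out."""
    missing = [name for name in REQUIRED_TERMS if not terms.get(name)]
    return (signed and not missing, missing)


# Illustrative draft: signed, but silent on throughput and credits.
draft = {"rate_floor": "rates fixed for the term", "cache_duration": "TTL stated in writing"}
print(verify_amendment(draft, signed=True))
```

A verbal assurance maps to an empty `terms` dictionary, which fails on all four counts.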

The Metric

73% vs 23%.

- What it measures. Share of AI experts versus the US public who expect AI to have a positive impact on how people do their jobs.

- Why it matters now. A 50-point gap between the people building AI and the people living with it is the political backdrop for every enterprise AI procurement decision this year; vendor scrutiny rises in proportion.

- Source. Stanford HAI, 2026 AI Index Report, April 13, 2026.

The Lens — Horizon Search Institute

Human Performance. Colorado and California now legally define "neural data"; the MIND Act directs the FTC to run a one-year study of consumer neurotech privacy gaps. [Cooley]

Responsible AI. PwC finds AI leaders are 1.7x more likely to have a formal Responsible AI framework; governance has become the ROI variable. [PwC]

Planetary Futures. The IEA revised 2026 global data center electricity demand to 1,100 TWh, up 18% from December, equal to Japan's annual consumption. [IEA]

Governance and Diplomacy. The US reports the lowest trust (31%) in its own government to regulate AI of any country surveyed; Singapore leads at 81%. [Stanford HAI]

Links Worth Your Time

- MIT Technology Review — Charts on the state of AI in 2026. The fastest visual entry into Stanford's 400-plus-page index.

- Brookings — Global energy demands within the AI regulatory landscape. Situates the compute crunch inside international governance frameworks.

- IEEE Spectrum — Stanford's 2026 AI Index trends. The most readable technical summary of the index, with charts worth saving.

- ITIF — Four reasons AI data centers won't overwhelm the grid. A credible counter-narrative to peak-doom infrastructure commentary.

- Implicator.ai — The flat-fee era is over. The clearest buyer-focused walkthrough of Anthropic's pricing changes.

Sources

Anthropic, updated enterprise help documentation, April 14, 2026. anthropic.com

Implicator.ai, "Anthropic shifts enterprise billing to per-token pricing; the flat-fee era is over," April 14, 2026. implicator.ai

OpenAI, Codex pricing and release notes, April 2026. releasebot.io/updates/openai

Stanford Institute for Human-Centered Artificial Intelligence, 2026 AI Index Report, April 13, 2026. hai.stanford.edu

Sarah Perez, "Stanford report highlights growing disconnect between AI insiders and everyone else," TechCrunch, April 13, 2026. techcrunch.com

PwC, "Three-quarters of AI's economic gains are being captured by just 20% of companies," press release, April 13, 2026. pwc.com

Anthony Ha, "Anthropic's rise is giving some OpenAI investors second thoughts," TechCrunch (citing Financial Times), April 14, 2026. techcrunch.com

Cooley LLP, "Neurotechnology progress fuels urgency of neural data privacy regulation," Medtech Insight, December 22, 2025. cooley.com

International Energy Agency, "Energy demand from AI," Energy and AI report, 2026. iea.org